When determining the limit of an expression, the first step is to substitute the value the independent variable approaches into the expression. If a real number results, you are all set; that number is the limit.
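The substitution step can be sketched in a few lines of Python. This is only an illustration: the helper `try_substitution` and the two sample expressions `easy` and `troublesome` are made up for this sketch, and a failed substitution is detected simply by catching Python's `ZeroDivisionError`.

```python
def try_substitution(expr, value):
    """Evaluate expr at value; return None if no real number results."""
    try:
        return expr(value)
    except ZeroDivisionError:
        # Substitution produced a division by zero, so it gives no answer.
        return None


def easy(x):
    # Hypothetical example: substitution at x = 1 gives a real number.
    return (x ** 2 + 1) / (x + 2)


def troublesome(x):
    # Hypothetical example: substitution at x = 1 gives 0/0.
    return (x ** 2 - 1) / (x - 1)


if __name__ == "__main__":
    print(try_substitution(easy, 1.0))
    print(try_substitution(troublesome, 1.0))
```

When the helper returns `None`, substitution alone has not settled the limit and more work is needed.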

When you do not get a real number, the expression is often one of the several indeterminate forms listed below:

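Indeterminate Forms: $\frac{0}{0}$, $\frac{\infty}{\infty}$, $0\cdot\infty$, $\infty-\infty$, $0^{0}$, $1^{\infty}$, $\infty^{0}$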

They are called indeterminate forms because some algebraic manipulation to simplify (or sometimes complicate) the expression is needed before its value can be determined. The calculus technique called L’Hôpital’s Rule may be used in some situations.

Part of the reason they are called indeterminate forms is that different expressions with the same indeterminate form may result in different values.
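To make this concrete, here are three limits, chosen purely as illustrations, that all have the form $\frac{0}{0}$ at $x = 0$ yet have three different values:

```latex
\begin{align*}
\lim_{x\to 0}\frac{\sin x}{x} &= 1, \\
\lim_{x\to 0}\frac{x^{2}}{x} &= \lim_{x\to 0} x = 0, \\
\lim_{x\to 0}\frac{5x}{x} &= \lim_{x\to 0} 5 = 5.
\end{align*}
```

Knowing only that an expression has the form $\frac{0}{0}$ therefore says nothing about what its limit is.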

Before continuing with the discussion of indeterminate forms, I should point out that there are also determinate forms: expressions similar to those above that always result in the same value.

Determinate Forms and the values they approach:

Returning to indeterminate forms, textbooks contain examples and exercises that illustrate how to evaluate them. Here are two examples illustrating a few of the techniques that can be used.

Example 1: .This is an example of the indeterminate form

. With exponents, logarithms may often be used to find the value.

The limit is now of the indeterminate form , so L’Hôpital’s Rule may be used. Continuing

So then the natural logarithm of the limit is 1, and therefore the limit itself is $e^{1} = e$.
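As a worked illustration of the logarithm technique, take the classic limit $\lim_{x\to\infty}\left(1+\frac{1}{x}\right)^{x}$. It is chosen here as an assumption because it has the form $1^{\infty}$ and the value $e$, and the symbol $L$ is introduced only for this sketch:

```latex
\begin{align*}
L &= \lim_{x\to\infty}\left(1+\frac{1}{x}\right)^{x} \\
\ln L &= \lim_{x\to\infty} x\,\ln\!\left(1+\frac{1}{x}\right)
       = \lim_{x\to\infty}\frac{\ln\!\left(1+\frac{1}{x}\right)}{\frac{1}{x}}
       \qquad \left(\text{form } \tfrac{0}{0}\right) \\
      &= \lim_{x\to\infty}\frac{\dfrac{1}{1+\frac{1}{x}}\cdot\left(-\dfrac{1}{x^{2}}\right)}{-\dfrac{1}{x^{2}}}
       \qquad \text{(L'H\^opital's Rule)} \\
      &= \lim_{x\to\infty}\frac{1}{1+\frac{1}{x}} = 1 \\
L &= e^{1} = e
\end{align*}
```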

Example 2: is an example of the indeterminate form

.

Write the expression with a common denominator:

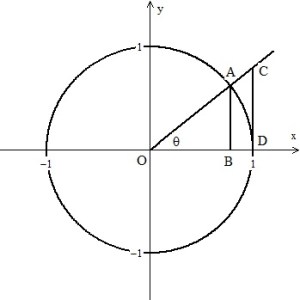

The second limit above is the definition of the derivative of . (L’Hôpital’s Rule may also be used with the second limit above.)

In fact, the definition of the derivative of any function $f$ gives an indeterminate expression of the form $\frac{0}{0}$.
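A quick numerical sketch shows why the difference quotient is of the form $\frac{0}{0}$: as $h$ shrinks, the numerator and the denominator both approach 0, while their quotient settles near the derivative. The function `cube`, the point $a = 2$, and the helper `difference_quotient` are assumptions chosen only for illustration.

```python
def difference_quotient(f, a, h):
    """Return (numerator, denominator, quotient) of (f(a + h) - f(a)) / h."""
    numerator = f(a + h) - f(a)
    denominator = h
    return numerator, denominator, numerator / denominator


def cube(x):
    # Sample function; its derivative at x = 2 is 3 * 2**2 = 12.
    return x ** 3


if __name__ == "__main__":
    for h in (0.1, 0.01, 0.001, 0.0001):
        num, den, quot = difference_quotient(cube, 2.0, h)
        print(f"h={h:<8} numerator={num:.8f} denominator={den:.8f} quotient={quot:.6f}")
```

Floating-point arithmetic limits how small $h$ can usefully be, so this is a way to see the pattern, not a substitute for evaluating the limit.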